Author: Megan Humburg

Key Ideas

- Teachers and students should have some foundational rights in the age of AI that protect their privacy and agency in the classroom

- We need to hold companies that develop AI tools for education to the same high ethical standard that we hold ourselves to as educational researchers

- Conversations across different fields (like education and political science) can help us brainstorm how to design a better future for the ethical use of technology

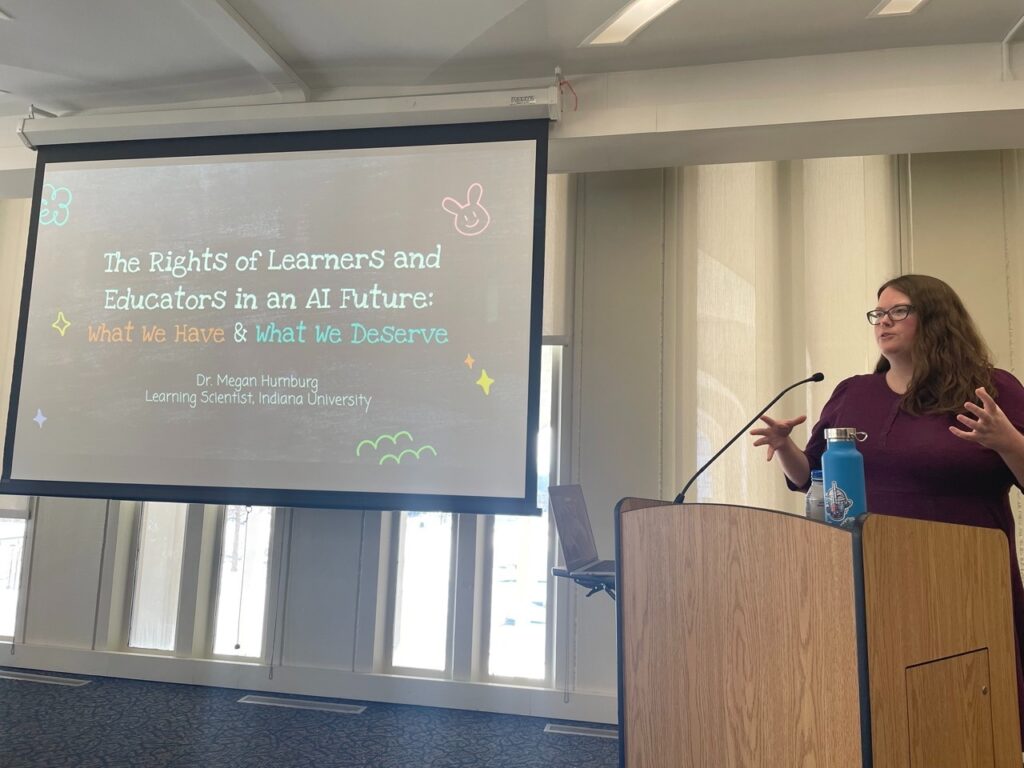

I recently visited the University at Albany to give a talk in an ongoing series about Artificial Intelligence (AI) and Democracy. When I first received the invitation, I had a bit of an imposter syndrome moment – what could I possibly have to say about democracy as a learning scientist? Sure, I have a good amount of knowledge about designing AI-supported learning environments, and I know quite a bit about the ethics of using AI, but talking about technology through the lens of democracy and politics felt like taking a large step outside of my academic comfort zone.

However, as I reflected on my own experiences working with teachers and students, I realized that I didn’t need to be a political expert in order to have something to say about how AI could impact our democracy. I talk to teachers and students all the time about how new AI technologies are impacting their daily lives, from concerns about cheating and data privacy to hopes for more engaging, exciting ways to learn together. This is the place where politics becomes personal, where AI policies have practical impacts on how we teach and learn. At its core, democracy is about people. It is about the power we have when we come together and how we exercise that power to make our society better. I realized that talking about how AI impacts democracy is really just talking about how AI impacts people. And that is something I know plenty about.

In talking with my learning sciences colleagues, I decided to frame my talk around ethical principles that researchers are already deeply familiar with – the governing guidelines from the Institutional Review Board (IRB). If you’re not familiar with the IRB, this is the group of people at each university who decide whether or not a proposed research project is allowed to collect data. All educational researchers have to get IRB approval before conducting research with people, because this process is designed to make sure that research participants are being treated fairly and ethically. IRB requirements rely on three key ethical principles: Respect for Persons, Beneficence, and Justice. Respect for persons means that we give our participants all of the information about our study, and they have full autonomy to accept or reject the opportunity to participate. Beneficence is the idea of maximizing the benefits of our study and minimizing the potential harms. Justice means that we make sure the benefits of our research are fairly distributed to everyone.

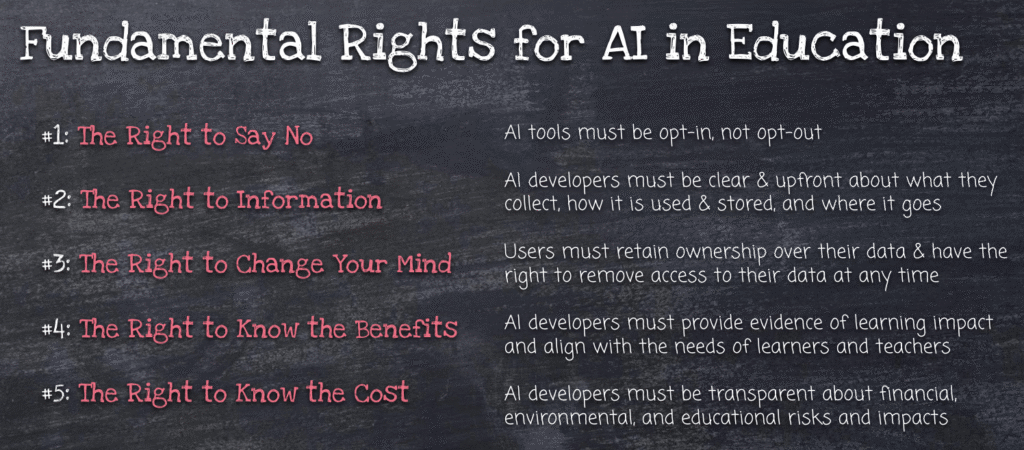

Based on these ethical principles, I asked the question: What would it look like to hold AI developers and tech companies to the same high standard of ethics? In response, I came up with five fundamental rights that I argue anyone developing AI tools for education should be required to respect.

The main takeaway from this presentation was that, from my perspective, when AI companies try to sell a new tool to classrooms without enough evidence of how the tool impacts learning, they are conducting what amounts to unethical research on students and teachers. AI technologies are changing so rapidly these days, and companies are pushing for more and more people to use their tools, but the users of AI tools often don’t have access to information about the benefits, the potential harms, and the data handling policies that the tools use. If this information is available, it is usually hidden in dense, hard-to-understand privacy policies. But as educational researchers, we know it is our responsibility to clearly communicate the benefits and potential harms of our studies. We don’t leave it up to the users to figure out whether or not their data is safe, and we don’t downplay potential risks in order to persuade people to participate. Above all, we make sure that the final decision of whether to participate (and how to participate) remains solely with students and teachers.

The audience for this talk came from a mixture of fields, from instructional design and learning sciences to political science and public policy. I also sent the recording of the talk to a few teacher friends to get their take on the idea of student and teacher rights in the age of AI. It was fascinating to see that people from different walks of life and fields of research were thinking about AI ethics in surprisingly similar ways. It reminded me that so many of us are trying to solve similar problems, and that we can come up with better solutions for how to protect the rights of students and teachers when we open up the conversation to many different kinds of expertise.

If you’d like to learn more about the AI and Democracy speaker series hosted by Dr. Haesol Bae & Dr. Stefan Kehlenbach at the University at Albany, check out their posts on LinkedIn.