Authors: Dr. Dalila Dragnić-Cindrić and Dr. Megan Humburg

Key Ideas:

- Intentional design of artificial intelligence (AI) agents can support students’ scientific inquiry as they play a game, shifting AI’s role away from providing answers.

- Our approach was to create two kinds of AI-based agents, a helper agent and a feedback agent, both of which support student inquiry.

- At the same time, our approach gives classroom teachers insights that help them engage with students around concepts and the process of inquiry.

Dr. Dalila Dragnić-Cindrić and Dr. Megan Humburg, members of the EngageAI Institute’s research team, discuss their approach to integrating AI-driven agents into educational science games.

Dalila: Megan, why do we design science learning games, and what do you want teachers to know about the thinking that goes into the design?

Megan: We’re interested in science learning games because stories and narratives help students engage in scientific inquiry in playful ways. Playful learning encourages kids to explore science in ways that are emotionally engaging and collaborative. We bring AI agents into science learning games to better support that collaboration and scientific inquiry.

Play is engaging, but students need guidance for it to lead to science learning. In a classroom of 30-something kids and one teacher, the teacher can't be with every student at every moment to guide their play through these open-ended learning games. And yet, we don't want students to feel confused about what they should do to learn. The role of the agent is to help kids understand where to go, what to do, how to begin this inquiry process, and how to make sense of the data and ideas they are exploring.

Dalila: Can you give me an example, perhaps based on the recent design case paper you’ve written for EngageAI?

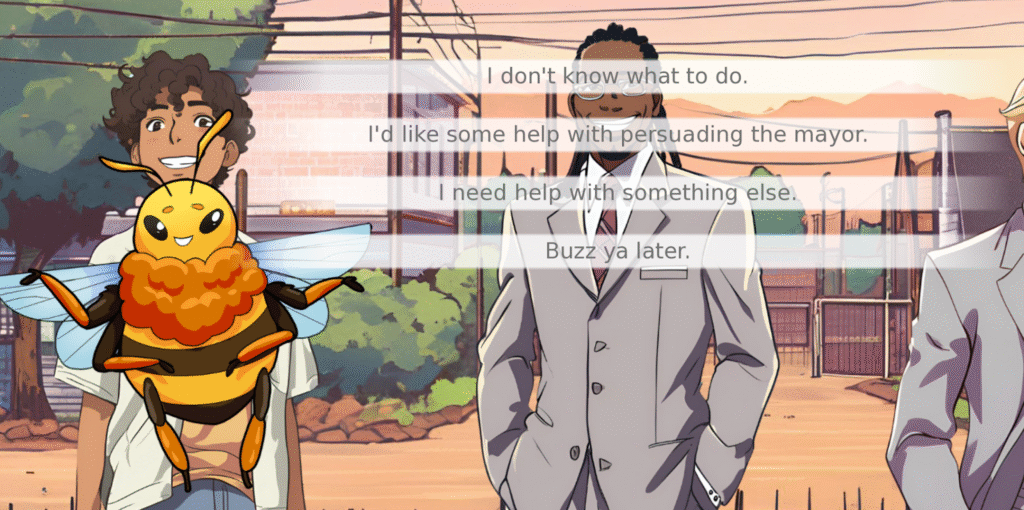

Megan: Sure. We made SciStory: Pollinators, a game where students explore a community with an empty lot. Different neighbors have different ideas about what to build on this plot of land, and the students face a dilemma: What do we do with this plot of land, and how do we convince the town’s mayor that our plan is the right one?

At the heart of this story is an important scientific issue about pollinators and community gardens. Students explore the community, talk to neighbors, think about their opinions, and gather evidence about pollinators, community gardens, parking structures—all the different things that could be built. The AI agent helps students gather information, make sense of it, and build a persuasive argument for the mayor.

Dalila: Games can be very complex, drawing on different kinds of knowledge, from scientific content knowledge to argumentation skills. Students must carefully consider the evidence and structure their arguments well. What stood out to you in terms of designing these various agents?

Megan: One of the most interesting things for me was shifting the focus of AI. Traditional AI agents are often just enormous collections of knowledge focused on answering questions. They can answer any science question on earth, but they aren't necessarily skilled at guiding student inquiry. We realized we needed an AI agent that was not just a wealth of knowledge but a facilitator.

Dalila: So, AI needs pedagogical support, somewhat like the support teachers need to switch roles from the "sage on the stage" to the "guide on the side."

Megan: Yes. We had to figure out how to get an AI agent to facilitate student inquiry instead of just giving students all the answers. This led to a big insight! We realized we needed two kinds of agents: feedback agents and helper agents. The feedback agents evaluate students' arguments, not by telling them what a better argument is, but by giving them guidance, such as how to be more specific or how to incorporate more evidence.

They give students the agency to find the evidence on their own and synthesize the ideas. The design challenge becomes creating an agent that facilitates the way a teacher would, rather than one that knows everything.

Dalila: Absolutely. Our student focus groups showed that our middle schoolers really appreciate the support teachers provide, not just around content, but all the different ways they support a student’s own inquiry. How do we support teachers by adding this agent as a teacher’s helper?

Megan: I think that speaks to this broader conversation of whether AI will replace teachers, and our answer is a firm “no!”

Our conversations with teachers in these focus groups were not about putting their skills into an AI agent. Instead, they were about designing scaffolds so that agents can answer low-level science questions.

An AI agent can give students some scientific ideas about pollination and gardens so that the teacher doesn't need to answer every single science question, but the teacher can still guide students through the more difficult conceptual work and a lot of the emotional and social work. For example, our AI agents can evaluate an argument and say where it is not specific, but the agents don't provide the emotional support that a skilled teacher does when a student is frustrated.

Dalila: I agree. I see the AI agents as collaborators who work with the teachers or the students, but definitely not as a replacement function, and certainly not for emotional support!

We also heard from the teachers that they wanted students to maintain agency over their own learning. We designed Tulip, our helper agent for students. The teachers wanted Tulip to be available with a click, but during gameplay, students weren't using that option as much as we'd hoped. So there is a tension: how do we adequately support students' agency without overwhelming them or giving up control completely?

Megan: We found that although we designated Tulip as a helper, students treated all the characters they encountered as fully fleshed out people who could answer any questions. For example, they’d ask Nadia, the beekeeper, “Hey, how can I convince the mayor? How do I make my argument better?”, but she was designed to answer science questions.

We realized that all of the agents needed to be ready and capable of handling these help-seeking questions more flexibly, because students anthropomorphize these agents and expect constructive feedback just like they would get from a teacher in real life.

Dalila: Students bring their expectations to the classroom—from real life, like you mentioned, but also from other games. They expect a certain quality of graphics or the ability to do certain things.

We had to deal with the challenge of expectations, like when students expected a 3D game. It was wise to switch to a 2D, comic-book-like design to evoke a different type of interaction, so we weren't pitted against commercial games like Fortnite. This allowed us to focus on the character interaction and evidence gathering that support inquiry, and to use AI agents to create highly productive learning engagements. We wanted agents that would help guide the students in the right direction or gently nudge them away from unproductive engagements.

Megan: To encourage productive learning, we modeled our AI-agent conversations after skilled teacher facilitation. We designed guardrails to make sure the agents won’t answer student questions like, “Can you write an argument for me?”

If a student tries to offload important cognitive work, the agent gives advice and guidance like, “Maybe you should talk to Nadia next,” or “Have you considered the bees?”

These “teacher facilitation moves” provide helpful guidance without giving all the answers.

Dalila: In focus groups, teachers also raised concerns about student safety and privacy, and the pedagogical appropriateness of the game. As game designers, how do we communicate all the steps taken to address these valid concerns?

Megan: That's a really important question. With all of the AI tools out there, the key is to make things transparent and understandable, especially to folks who don't have a tech background. Our agents use dialogue managers under the hood. Before a student's question ever reaches the agent, the dialogue manager checks whether it is appropriate. If it's inappropriate, it gets diverted, and the agent lets the student know, "That's not an appropriate topic. Can you ask me something about gardens instead?"

The dialogue manager can flag the inappropriate language and notify the teacher. This technical layer provides guardrails that address safety and pedagogical appropriateness.
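For readers curious what that in-between layer might look like, here is a minimal Python sketch. It is not the EngageAI implementation: the article doesn't say how appropriateness is judged, so the keyword lists, the `DialogueManager` class, and its `route` method are hypothetical stand-ins (a real system would likely use a trained classifier rather than word lists). The sketch shows the flow described above: screen the message first, divert and flag it for the teacher if it is inappropriate, redirect requests to offload cognitive work with a facilitation move, and only then pass it to the agent.

```python
import re
from dataclasses import dataclass, field

# Hypothetical word list standing in for a real appropriateness check
BLOCKED_TERMS = {"stupid", "idiot"}
# Hypothetical phrases signalling an attempt to offload cognitive work
OFFLOAD_PHRASES = ("write an argument for me", "write my argument", "give me the answer")

APPROPRIATENESS_REPLY = ("That's not an appropriate topic. "
                         "Can you ask me something about gardens instead?")
FACILITATION_REPLY = "Maybe you should talk to Nadia next. Have you considered the bees?"


@dataclass
class DialogueManager:
    # Messages flagged for the teacher, stored as (student_id, message) pairs
    teacher_flags: list = field(default_factory=list)

    def route(self, student_id: str, message: str) -> tuple:
        """Return ('agent', message) to forward it, or ('redirect', reply) to divert it."""
        text = message.lower()
        words = set(re.findall(r"[a-z']+", text))
        # 1. Inappropriate language never reaches the agent and is flagged for the teacher
        if words & BLOCKED_TERMS:
            self.teacher_flags.append((student_id, message))
            return ("redirect", APPROPRIATENESS_REPLY)
        # 2. Requests to do the student's thinking get a facilitation move instead
        if any(phrase in text for phrase in OFFLOAD_PHRASES):
            return ("redirect", FACILITATION_REPLY)
        # 3. Everything else is forwarded to the agent unchanged
        return ("agent", message)
```

For example, `DialogueManager().route("s1", "How do bees help gardens?")` forwards the question to the agent, while "Can you write an argument for me?" is answered with a nudge toward Nadia and the bees. Keeping the screening in a separate layer means the rules can be inspected and explained to teachers without touching the agents themselves.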

Dalila: I think it is going to resonate with educators to know that we considered this and put in technical solutions like the dialogue manager, an in-between layer that can prevent inappropriate conversations or completely off-topic engagements. It is super important to explain these design decisions.

Megan: In terms of data privacy, we also built trust by letting teachers know the agents we developed were running on university-owned servers, and we were the only ones handling the data.

It’s crucial for teachers to know what’s happening to student data. They need to know what’s happening in their classroom, where the data is coming from, where it’s going, and who has their hands on it.

Dalila: Absolutely. So, what’s the takeaway message for educators from our paper, and what is the team hoping to develop in the future?

Megan: I want the teachers to know that the intentional design of AI agents can provide flexible scaffolds for students’ scientific inquiry, support teachers by managing lower-level tasks and providing data insights, and enable educators to focus on key conceptual and socio-emotional guidance.

Right now, we have log files of all student actions and every interaction with the AI agents. We are developing a teacher dashboard to visualize progress, including how students' arguments evolve; the kinds of feedback students receive and whether that feedback helps them progress; the contents of students' notebooks, which can serve as artifacts of their learning; and a narrative map showing how much of the story they have explored. This data will allow teachers to identify whether students are missing key topics or need encouragement to explore further.
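As an illustration of how log files could feed a narrative map, here is a small Python sketch. The dashboard is still in development and its data format isn't described, so the `(student_id, event_type, location)` event tuples, the location names, the set of key topics, and the `coverage_report` function are all hypothetical. It computes, for each student on a roster, what fraction of the story locations they have visited and which key topics they haven't reached yet.

```python
from collections import defaultdict

# Hypothetical story locations and key topics; not the game's actual map
STORY_LOCATIONS = {"nadia_beekeeper", "community_garden", "parking_structure", "mayor_office"}
KEY_TOPICS = {"nadia_beekeeper", "community_garden"}


def coverage_report(student_ids, log_events):
    """Summarize narrative-map coverage per student.

    log_events: iterable of (student_id, event_type, location) tuples (hypothetical format).
    Students on the roster with no visits still appear, with zero coverage.
    """
    visited = defaultdict(set)
    for student_id, event_type, location in log_events:
        # Only count visits to known story locations
        if event_type == "visit" and location in STORY_LOCATIONS:
            visited[student_id].add(location)

    report = {}
    for student_id in student_ids:
        seen = visited.get(student_id, set())
        report[student_id] = {
            "explored_fraction": len(seen) / len(STORY_LOCATIONS),
            "missing_key_topics": sorted(KEY_TOPICS - seen),
        }
    return report
```

Passing in the class roster, rather than deriving students from the log alone, means a student who hasn't explored anything still shows up on the dashboard, which is often the case a teacher most needs to see.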

Dalila: I love the emphasis on supporting the teacher. What it really comes down to is this: When designed with clear intentions, AI can help teachers to facilitate student inquiry in playful learning environments.