Authors: Xizi Wang, Ziwei Zhao, and David Crandall

Key Ideas

- Real-Time Active Speaker Detection: A robust online method for identifying the active speaker in multi-person conversations, even under challenging visual and acoustic conditions.

- Cross-View Person Matching: A novel approach for matching individuals across synchronized, heterogeneous camera views, including first-person and third-person perspectives.

- Adapting Vision Models to Classroom Settings: Vision techniques tailored to the EngageAI learning environment by collecting annotated classroom data and enabling real-time, deployable inference.

As part of the EngageAI initiative, our team has been developing new techniques for computer vision—technology that helps computers “see”—to better understand social dynamics in real-world, multi-camera environments, such as how teachers and students interact in classrooms. These methods serve as the visual intelligence backbone for SyncFlow, the multimodal analytics pipeline that integrates video, audio, and textual data to support collaborative learning and behavioral research.

From a computer vision perspective, we face the challenge of interpreting rich, unstructured video data from many different sources, ranging from static classroom cameras to wearable devices like GoPros. Our work covers a spectrum of tasks, from basic person detection to higher-level understanding of social interactions, speaker behavior, and attention dynamics. In this blog post, we focus on two challenges that are critical to understanding social interaction: real-time active speaker detection and cross-view person matching.

Real-Time Active Speaker Detection

One of the foundational tasks in understanding group conversations is determining who is speaking and when. This task, known as active speaker detection, requires fusing both visual and audio cues. Traditional approaches often rely on batch processing with access to future frames and can be prohibitively expensive for real-time use.

Building on our previous work, the Long-Short Context Network (LoCoNet), we developed a technique that performs active speaker detection in real time, enabling responsive and efficient deployment in live environments. Compared to prior state-of-the-art models, including our own work published at the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 2024, this online technique requires nearly a hundred times less computation while maintaining state-of-the-art accuracy on several public benchmarks.

This significant gain in efficiency allows us to run speaker detection on live classroom video, enabling the system to track who is speaking across group interactions without delays or post-processing. This unlocks powerful downstream capabilities like real-time dialogue analysis and conversational behavior tracking.
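To make the batch-versus-online distinction concrete, here is a minimal sketch of a causal decision rule: each frame's decision uses only the current and past frames, never future ones. This is an illustration only, not the LoCoNet-based method described above. The class name `OnlineSpeakerSmoother`, the window size, the threshold, and the assumption that some upstream audio-visual model already produces a per-person speaking score in [0, 1] for every frame are all hypothetical.

```python
from collections import deque


class OnlineSpeakerSmoother:
    """Causal smoothing of per-frame speaking scores (hypothetical helper).

    Assumes an upstream audio-visual model supplies, for each frame, a score
    in [0, 1] per visible person. Only past and current frames are used.
    """

    def __init__(self, window=5, threshold=0.5):
        self.window = window          # number of most recent frames to average
        self.threshold = threshold    # mean score needed to count as speaking
        self.history = {}             # person_id -> deque of recent scores

    def update(self, frame_scores):
        """Consume one frame of scores and return the set of active speakers.

        frame_scores: dict mapping person_id -> speaking score for this frame.
        """
        active = set()
        for person_id, score in frame_scores.items():
            # A bounded deque drops the oldest score automatically,
            # so memory and compute per frame stay constant.
            buf = self.history.setdefault(person_id, deque(maxlen=self.window))
            buf.append(score)
            if sum(buf) / len(buf) >= self.threshold:
                active.add(person_id)
        return active
```

Calling `update` once per incoming frame yields a decision immediately, which is the property that makes live use possible. A batch method could instead average over frames on both sides of the current one, at the cost of waiting for the future frames to arrive.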

Cross-View Person Matching

Classroom environments often include multiple synchronized video streams from heterogeneous cameras—some stationary, some moving, and some even worn by teachers or students. A core challenge is cross-view person matching: identifying the same person across these diverse perspectives, even if their appearance differs dramatically or if they are not visible in certain views, such as first-person camera wearers.

Our first attempt at this problem resulted in a CVPR 2024 paper that achieved state-of-the-art performance on a public dataset. However, it fell short when applied to classroom settings due to unrealistic assumptions and limited diversity in the training data.

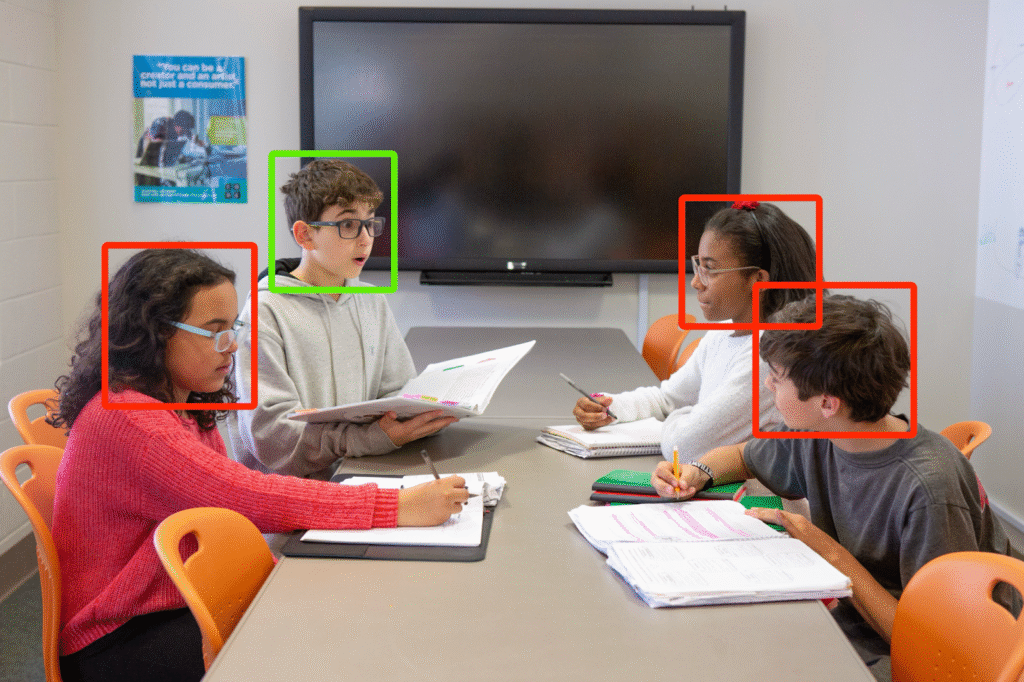

To overcome these limitations, we collected and annotated a new dataset of simulated classroom interactions and developed an improved matching technique designed specifically for this more complex environment. Our new model significantly outperforms the previous approach by leveraging contextual cues such as relative positioning, motion matching, and temporal consistency. This work moves us closer to robust identity tracking across multiple viewpoints—a key requirement for understanding group dynamics.
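One common way to frame cross-view matching is as an assignment problem: score every pair of people across two views, then pick the one-to-one pairing with the highest total score. The sketch below illustrates that framing only and is not our model. The function names, the feature keys `appearance` and `motion`, the equal weights, and the assumption that motion features are already expressed in a view-independent form are all hypothetical. It uses a brute-force search that is only practical for a handful of people; a real system would use a proper assignment solver.

```python
from itertools import permutations


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = sum(a * a for a in u) ** 0.5
    norm_v = sum(b * b for b in v) ** 0.5
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def match_people(view_a, view_b, w_appearance=0.5, w_motion=0.5):
    """Match each person in view_a to a distinct person in view_b.

    view_a, view_b: dict mapping track_id -> {"appearance": [...], "motion": [...]}.
    Requires len(view_a) <= len(view_b); extra people in view_b stay unmatched.
    Returns a dict mapping view_a track ids to view_b track ids.
    """
    ids_a, ids_b = list(view_a), list(view_b)
    if len(ids_a) > len(ids_b):
        raise ValueError("view_a must not contain more people than view_b")

    def pair_score(a, b):
        # Weighted sum of two illustrative cues; the weights are assumptions.
        return (w_appearance * cosine(view_a[a]["appearance"], view_b[b]["appearance"])
                + w_motion * cosine(view_a[a]["motion"], view_b[b]["motion"]))

    best_perm, best_total = (), float("-inf")
    # Try every one-to-one pairing and keep the one with the highest total score.
    for perm in permutations(ids_b, len(ids_a)):
        total = sum(pair_score(a, b) for a, b in zip(ids_a, perm))
        if total > best_total:
            best_perm, best_total = perm, total
    return dict(zip(ids_a, best_perm))
```

Choosing the pairing jointly, rather than picking each person's best match independently, prevents two people in one view from being assigned to the same person in the other.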

Adapting Vision Models to the EngageAI Environment

While much of our initial development is benchmarked on public datasets, our long-term goal is to adapt these algorithms to the unique constraints of EngageAI learning environments. That means dealing with occlusions, partial views, inconsistent lighting, and diverse student behaviors.

To this end, we’ve invested in annotating real and simulated classroom data, making our models more robust and context-aware. We’ve also prioritized real-time inference capabilities, ensuring that these techniques can eventually support live feedback or classroom analytics tools. These efforts are part of a broader push to build computer vision systems that go beyond perception and can understand interaction, engagement, and learning.

Moving forward, we plan to continue this cycle of public benchmarking followed by classroom adaptation, expanding into new threads like multi-view attention estimation and object-agnostic action recognition. Together, these vision modules will help turn raw video into meaningful insights about how people engage, collaborate, and learn.

To stay updated on our work and EngageAI’s initiatives, follow EngageAI on Twitter/X and LinkedIn.